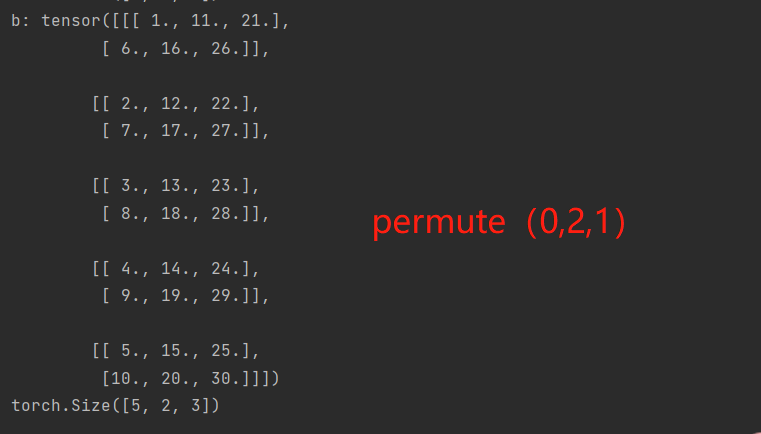

`torch.permute()` rearranges the dimensions of a tensor into the requested order. It returns a view of the original tensor with its dimensions permuted instead of copying the data. The returned tensor therefore has the same number of elements as the original; only the order of its dimensions (and so its shape) changes.

On a Q&A thread titled "Use of PyTorch permute in RCNN", the asker writes: "I am looking at an implementation of RCNN for text classification using PyTorch. There are two points where the dimensions of tensors are permuted using the permute function."

A PyTorch forum thread, "How is permutation implemented in PyTorch cuda" (Fei Wang, March 5, 2019), asks a lower-level version of the same question: "Hi, I am interested to find out how PyTorch cuda implement permutations."

A related snippet converts NumPy arrays to tensors before permuting dimensions for PyTorch, casting inputs to `float` and labels to `long`, e.g. `ytrain = torch.from_numpy(ytrain).long()`, `xtest = torch.from_numpy(xtest).float()` and `ytest = torch.from_numpy(ytest).long()`.

Not to be confused with `permute`, `torch.randperm(n)` returns a random permutation of integers from 0 to n - 1. Its optional `generator` argument (`torch.Generator`) is a pseudorandom number generator for sampling.

`torchvision.utils.make_grid(tensor: Union[Tensor, List[Tensor]], nrow: int = 8, padding: int = 2, normalize: bool = False, value_range: Optional[Tuple[int, int]] = None, scale_each: bool = False, pad_value: float = 0.0) → Tensor`

Parameters:

- `tensor` (Tensor or list) – 4D mini-batch Tensor of shape (B x C x H x W) or a list of images all of the same size.
- `nrow` (int, optional) – Number of images displayed in each row of the grid.
- `padding` (int, optional) – Amount of padding.
- `normalize` (bool, optional) – If True, shift the image to the range (0, 1) by the min and max values specified by `value_range`.
- `value_range` (tuple, optional) – Tuple (min, max) where min and max are numbers; these numbers are then used to normalize the image.
- `scale_each` (bool, optional) – If True, scale each image in the batch of images separately rather than using the (min, max) over all images.
- `pad_value` (float, optional) – Value for the padded pixels.

`class torch.nn.Embedding(num_embeddings, embedding_dim, padding_idx=None, max_norm=None, norm_type=2.0, scale_grad_by_freq=False, sparse=False, _weight=None, _freeze=False, device=None, dtype=None)`

A simple lookup table that stores embeddings of a fixed dictionary and size. This module is often used to store word embeddings and retrieve them using indices. The input to the module is a list of indices, and the output is the corresponding word embeddings.

Parameters:

- `num_embeddings` (int) – Size of the dictionary of embeddings.
- `embedding_dim` (int) – The size of each embedding vector.
- `padding_idx` (int, optional) – If specified, the entries at `padding_idx` do not contribute to the gradient; therefore, the embedding vector at `padding_idx` is not updated during training. The embedding vector at `padding_idx` defaults to all zeros, but can be updated to another value to be used as the padding vector.
- `max_norm` (float, optional) – If given, each embedding vector with norm larger than `max_norm` is renormalized to have norm `max_norm`.
- `norm_type` (float, optional) – The p of the p-norm to compute for the `max_norm` option.
- `scale_grad_by_freq` (bool, optional) – If given, this will scale gradients by the inverse of frequency of the words in the mini-batch.
- `sparse` (bool, optional) – If True, the gradient w.r.t. the `weight` matrix will be a sparse tensor. See Notes for more details regarding sparse gradients.

Variables:

- `weight` (Tensor) – The learnable weights of the module of shape (num_embeddings, embedding_dim), initialized from $\mathcal{N}(0, 1)$.

The output has shape $(*, H)$, where $*$ is the shape of the index input and $H$ = `embedding_dim`.

Examples (the looked-up values are random because `weight` is drawn from $\mathcal{N}(0, 1)$):

```python
import torch
import torch.nn as nn

# an Embedding module containing 10 tensors of size 3
embedding = nn.Embedding(10, 3)
# a batch of 2 samples of 4 indices each
input = torch.LongTensor([[1, 2, 4, 5], [4, 3, 2, 9]])
print(embedding(input))  # shape (2, 4, 3)

# example with padding_idx
embedding = nn.Embedding(10, 3, padding_idx=0)
input = torch.LongTensor([[0, 2, 0, 5]])
print(embedding(input))  # shape (1, 4, 3); vectors for index 0 are all zeros

# example of changing `pad` vector
padding_idx = 0
embedding = nn.Embedding(3, 3, padding_idx=padding_idx)
print(embedding.weight)  # row 0 starts as zeros
with torch.no_grad():
    embedding.weight[padding_idx] = torch.ones(3)
print(embedding.weight)  # row 0 is now all ones
```

`classmethod from_pretrained(embeddings, freeze=True, padding_idx=None, max_norm=None, norm_type=2.0, scale_grad_by_freq=False, sparse=False)`

Creates an Embedding instance from a given 2-dimensional FloatTensor.

Parameters:

- `embeddings` (Tensor) – FloatTensor containing weights for the Embedding. The first dimension is passed to Embedding as `num_embeddings`, the second as `embedding_dim`.
- `freeze` (bool, optional) – If True, the tensor does not get updated in the learning process. Equivalent to `embedding.weight.requires_grad = False`.
- `max_norm` (float, optional) – See module initialization documentation.
- `norm_type` (float, optional) – See module initialization documentation.
- `scale_grad_by_freq` (bool, optional) – See module initialization documentation.
- `sparse` (bool, optional) – See module initialization documentation.
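A minimal sketch of the `torch.permute()` behaviour described above, using an arbitrary 2 x 3 x 4 tensor: the shape changes, the element count does not, and the result is a non-contiguous view that shares memory with the input. The `.contiguous().view(...)` step at the end is one common way to get a reshapeable copy; `.reshape(...)` would also work.

```python
import torch

# A 2 x 3 x 4 tensor holding 0..23 (arbitrary), so every element is easy to track
x = torch.arange(24).reshape(2, 3, 4)

# New dim 0 <- old dim 2, new dim 1 <- old dim 0, new dim 2 <- old dim 1
y = x.permute(2, 0, 1)

print(x.shape, y.shape)              # torch.Size([2, 3, 4]) torch.Size([4, 2, 3])
print(x.numel() == y.numel())        # True: same number of elements
print(y.data_ptr() == x.data_ptr())  # True: y is a view over x's memory
print(y.is_contiguous())             # False: only the strides were reordered

# Element mapping for this ordering: y[k, i, j] is x[i, j, k]
print(y[3, 1, 2].item() == x[1, 2, 3].item())  # True

# .view() needs contiguous memory, so copy first (or use .reshape())
z = y.contiguous().view(4, 6)
print(z.shape)                       # torch.Size([4, 6])
```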
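The RCNN question quoted in this post does not show which permutes the implementation uses, so the following is only a hypothetical sketch of a common pattern in RCNN-style text classifiers, with made-up sizes and layer choices. One permute puts the sequence dimension first because `nn.LSTM` defaults to `batch_first=False`; another moves it last because `F.max_pool1d` pools over the final dimension.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

# Hypothetical sizes, chosen only for illustration
batch, seq_len, vocab, emb_dim, hidden = 4, 7, 100, 16, 8

embedding = nn.Embedding(vocab, emb_dim)
lstm = nn.LSTM(emb_dim, hidden, bidirectional=True)  # batch_first=False: expects (seq, batch, feat)
linear = nn.Linear(2 * hidden + emb_dim, hidden)

tokens = torch.randint(0, vocab, (batch, seq_len))   # (batch, seq)
emb = embedding(tokens)                               # (batch, seq, emb)

# Permute 1: sequence dimension first for the LSTM, then back again
out, _ = lstm(emb.permute(1, 0, 2))                   # (seq, batch, 2*hidden)
out = out.permute(1, 0, 2)                            # (batch, seq, 2*hidden)

# Concatenate context and word embedding, then project
feats = torch.tanh(linear(torch.cat([out, emb], dim=2)))  # (batch, seq, hidden)

# Permute 2: max_pool1d pools over the last dim, so move seq to the end
pooled = F.max_pool1d(feats.permute(0, 2, 1), kernel_size=seq_len)  # (batch, hidden, 1)
pooled = pooled.squeeze(2)                            # (batch, hidden)
print(pooled.shape)                                   # torch.Size([4, 8])
```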
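To make the contrast between `randperm` and `permute` concrete, here is a small sketch of `torch.randperm` with an explicit `torch.Generator`; the seed value 42 is arbitrary. Seeding the generator makes the permutation reproducible, and indexing with the result is one way to shuffle rows.

```python
import torch

# Seeded generator: the value 42 is arbitrary
g = torch.Generator().manual_seed(42)
perm = torch.randperm(6, generator=g)   # some ordering of 0..5

# Re-seeding a fresh generator reproduces the same permutation
g2 = torch.Generator().manual_seed(42)
same = torch.randperm(6, generator=g2)

# Indexing with the permutation shuffles rows (unlike permute, which reorders dims)
rows = torch.arange(12).reshape(6, 2)
shuffled = rows[perm]
print(perm, torch.equal(perm, same), shuffled.shape)
```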
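The `nn.Embedding` `padding_idx` behaviour can be checked directly. In this sketch (sizes chosen arbitrarily), summing the lookups and backpropagating leaves a zero gradient in the padding row even though index 0 appears twice in the input.

```python
import torch
import torch.nn as nn

emb = nn.Embedding(4, 2, padding_idx=0)   # 4 entries of size 2; index 0 is the pad
print(emb.weight[0])                      # the pad row starts as zeros

idx = torch.tensor([0, 1, 0, 3])          # the pad index appears twice
emb(idx).sum().backward()

# d(sum)/d(weight[i]) counts how often row i was looked up,
# except for the padding row, which receives no gradient
print(emb.weight.grad)
# rows: 0 -> [0, 0] (padding), 1 -> [1, 1], 2 -> [0, 0] (unused), 3 -> [1, 1]
```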
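A sketch of `max_norm` in action. The weights are overwritten with a constant (an arbitrary choice) so every row starts with Euclidean norm 6, i.e. sqrt(4 x 3^2). After a lookup, only the rows that were actually indexed are renormalized, and that renormalization modifies `weight` in place.

```python
import torch
import torch.nn as nn

emb = nn.Embedding(5, 4, max_norm=1.0)   # default norm_type=2.0 (Euclidean)
with torch.no_grad():
    emb.weight.fill_(3.0)                 # every row: sqrt(4 * 3**2) = 6.0

out = emb(torch.tensor([0, 2]))           # look up rows 0 and 2 only

print(out.norm(dim=1))                    # ~[1.0, 1.0]: returned vectors are renormalized
print(emb.weight.norm(dim=1))             # ~[1.0, 6.0, 1.0, 6.0, 6.0]: weight changed in place
```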
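A short sketch of `nn.Embedding.from_pretrained` with a hand-written 2 x 3 weight matrix (the values are arbitrary). It shows that the first dimension becomes `num_embeddings`, the second becomes `embedding_dim`, and the default `freeze=True` leaves the weight with `requires_grad=False`.

```python
import torch
import torch.nn as nn

# A hand-written 2 x 3 "pretrained" matrix: 2 embeddings of size 3
weights = torch.FloatTensor([[1, 2.3, 3], [4, 5.1, 6.3]])

embedding = nn.Embedding.from_pretrained(weights)          # freeze=True by default
print(embedding.num_embeddings, embedding.embedding_dim)   # 2 3
print(embedding.weight.requires_grad)                      # False

# Index 1 returns the second row of the matrix
print(embedding(torch.LongTensor([1])))

trainable = nn.Embedding.from_pretrained(weights, freeze=False)
print(trainable.weight.requires_grad)                      # True
```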
